Decoding Kubernetes & HTTP/2: Why Default Load Balancing Just Doesn’t Cut It 🤯

Hello, tech enthusiasts! Today, we’re diving deep into a real-world Kubernetes incident that Mariem Sboui, a brilliant Site Reliability Engineer, shared at Com 42 SRE 2026. Mariem spends her days running and scaling Kubernetes platforms in production, making her insights invaluable. She walked us through a fascinating challenge: why Kubernetes’ default load balancing falls short for HTTP/2 traffic. Get ready to uncover the mysteries behind unexpected traffic patterns and learn how to build more resilient systems!

The Incident: A Sudden Performance Hiccup 🚨

Imagine introducing HTTP/2 traffic for the first time to some critical production services. What could go wrong, right? Well, Mariem’s team soon discovered a glaring issue: external users began experiencing higher latency. While not catastrophic, the impact wasn’t negligible either: latencies increased by more than 300 milliseconds!

The team quickly noticed an unusual load pattern. Instead of an even distribution, one single backend pod was handling nearly all the incoming HTTP/2 traffic, while its siblings sat idly by. This was a head-scratcher. There were no resource constraints, plenty of idle capacity across pods and nodes, and even the Horizontal Pod Autoscaler (HPA) was functioning normally. Frustratingly, killing the overloaded pod didn’t even help; the traffic simply piled up on a single pod again.

The team’s initial mistake? They didn’t anticipate that HTTP/2 traffic would behave differently from HTTP/1 in a Kubernetes environment. The incident exposed a gap in their understanding of how traffic is actually routed inside a Kubernetes cluster.

Unmasking the Culprit: Kubernetes’ Default Load Balancing & HTTP/2 🤯

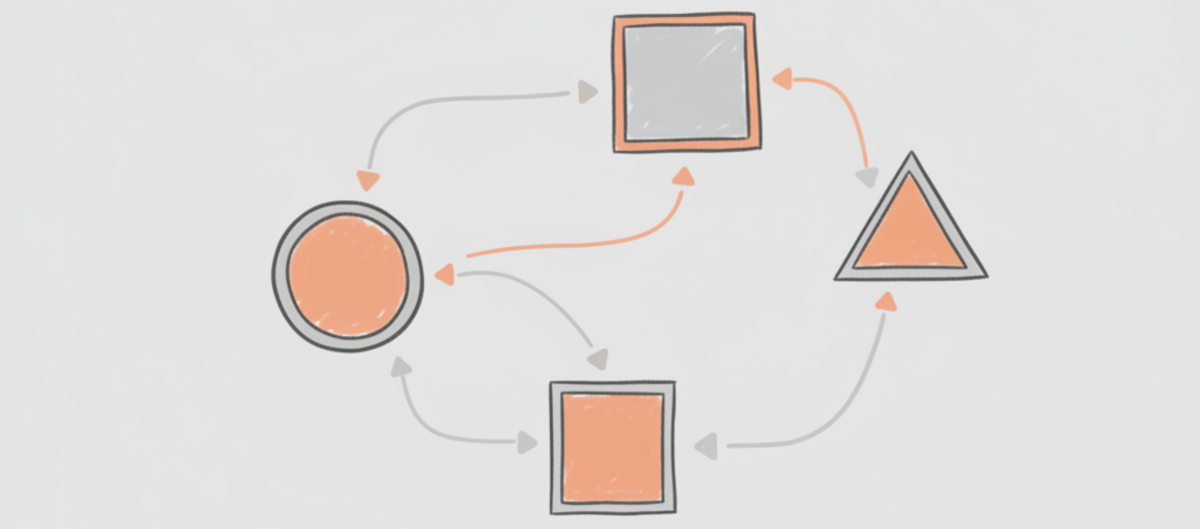

To understand the problem, we must first understand Kubernetes’ default load balancing mechanism. By default, the kube-proxy component performs Layer 4 (TCP) load balancing. When a new TCP connection is established, kube-proxy’s rules select one single backend pod for it (at random in the default iptables mode, round-robin in IPVS mode). Crucially, the chosen pod then handles all requests on that specific connection.

Here’s the kicker: kube-proxy operates at the connection level, not the request level. It doesn’t inspect individual HTTP requests within a connection.
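To make the connection-level behavior concrete, here is a small Python model. It assumes kube-proxy’s default iptables mode, where a Service’s rules try each endpoint in turn with probability 1/(n - i) so that the overall pick is uniform, and it uses a hypothetical `ConnectionTracker` class as a stand-in for the kernel’s connection tracking, which pins every later packet of a connection to the pod chosen for its first packet. It is a simplified sketch, not kube-proxy’s actual code.

```python
import random
from collections import Counter


def pick_endpoint(endpoints, rng):
    """Choose an endpoint the way a chain of iptables rules would.

    Rule i matches with probability 1 / (n - i) and the last rule always
    matches, which makes the overall choice uniform across n endpoints.
    """
    n = len(endpoints)
    for i, endpoint in enumerate(endpoints):
        if i == n - 1 or rng.random() < 1.0 / (n - i):
            return endpoint
    raise ValueError("service has no endpoints")  # reached only if the list is empty


class ConnectionTracker:
    """Hypothetical stand-in for conntrack: remembers the pod chosen per connection."""

    def __init__(self, endpoints, seed=0):
        self.endpoints = endpoints
        self.rng = random.Random(seed)
        self.table = {}  # connection id -> chosen pod

    def route(self, conn_id):
        # Only a connection's first packet goes through the selection rules;
        # later packets, and therefore later requests, reuse the stored choice.
        if conn_id not in self.table:
            self.table[conn_id] = pick_endpoint(self.endpoints, self.rng)
        return self.table[conn_id]


pods = ["pod-a", "pod-b", "pod-c"]
tracker = ConnectionTracker(pods, seed=42)

# 30,000 separate connections spread roughly evenly (about 10,000 per pod).
print(Counter(tracker.route(f"conn-{i}") for i in range(30_000)))

# 1,000 requests sent over one connection all reach the same pod.
print(Counter(tracker.route("long-lived-conn") for _ in range(1_000)))
```

The selection itself is fair; the imbalance only appears when few connections carry many requests.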

Why HTTP/1 Played Nicely (But HTTP/2 Didn’t) 🤝

This connection-level load balancing works perfectly fine for HTTP/1 traffic, and here’s why:

- HTTP/1’s typical behavior: Clients usually open multiple short-lived TCP connections. Each new connection gets its own backend selection, so over many connections the requests spread roughly evenly across the available pods.

However, HTTP/2 completely changes the game by introducing request multiplexing over a single connection.

- HTTP/2’s game-changer: Clients can send multiple concurrent requests over one single, persistent TCP connection.

- The Impact: If kube-proxy routes this single, long-lived HTTP/2 connection to one pod, all subsequent requests multiplexed on that connection go to the same pod. This leads directly to the observed overload, CPU throttling, and the frustrating latency users experienced.
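The difference between the two protocols can be simulated with the same connection-level rule: a pod is chosen uniformly at random once per connection, and every request on that connection follows it. The pod count, client count, and request volumes below are made-up illustration values, not figures from the incident.

```python
import random
from collections import Counter


def simulate(pods, connections, requests_per_connection, seed=0):
    """Count requests per pod when the backend is chosen once per TCP connection.

    Mirrors connection-level (Layer 4) balancing: a pod is drawn uniformly at
    random when a connection opens, and every request on it goes to that pod.
    """
    rng = random.Random(seed)
    load = Counter({pod: 0 for pod in pods})  # keep idle pods visible as 0
    for _ in range(connections):
        pod = rng.choice(pods)                # one decision per connection
        load[pod] += requests_per_connection  # all its requests follow it
    return load


pods = [f"pod-{i}" for i in range(10)]

# HTTP/1-style: many connections, few requests each.
http1 = simulate(pods, connections=1000, requests_per_connection=10)

# HTTP/2-style: two clients, each multiplexing 5,000 requests over one connection.
http2 = simulate(pods, connections=2, requests_per_connection=5000)

busy = sum(1 for n in http2.values() if n > 0)
print("HTTP/1 busiest / quietest pod:", max(http1.values()), min(http1.values()))
print("HTTP/2 pods receiving traffic:", busy, "of", len(pods))
```

With ten pods and only two long-lived connections, at most two pods can receive any traffic, no matter how many requests are multiplexed.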

The Immediate Fix: Application-Level Band-Aids 🩹

With an incident unfolding, Mariem’s team needed a swift, temporary solution to alleviate the performance degradation. Their focus was on application-level adjustments:

- Tuning application keep-alive settings: They tweaked these to influence connection behavior.

- Reducing connection reuse: For HTTP/2 clients, they actively reduced how often connections were reused.

- Increasing active connections: This pushed clients to open more connections, attempting to force better distribution.

These temporary measures did help improve traffic distribution, but Mariem emphasized that they were just band-aids – not a permanent, robust fix.
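The band-aids work by giving kube-proxy more connection-level decisions to make. The sketch below models a single HTTP/2 client that keeps a small pool of connections and recycles each one after a fixed number of requests. The parameters `pool_size` and `max_requests_per_conn` are hypothetical knobs for illustration; real clients and servers expose comparable settings under their own names.

```python
import random
from collections import Counter


def client_load(pods, total_requests, pool_size, max_requests_per_conn, seed=0):
    """Simulate one HTTP/2 client behind connection-level (Layer 4) balancing.

    The client keeps `pool_size` connections open and spreads requests over
    them in turn. A connection is closed and replaced once it has served
    `max_requests_per_conn` requests; each replacement gets a fresh backend
    choice, just like any new TCP connection.
    """
    rng = random.Random(seed)
    conns = [[rng.choice(pods), 0] for _ in range(pool_size)]  # [pod, served]
    load = Counter({pod: 0 for pod in pods})
    for i in range(total_requests):
        conn = conns[i % pool_size]
        if conn[1] >= max_requests_per_conn:
            conn[0], conn[1] = rng.choice(pods), 0  # reconnect: new pod draw
        load[conn[0]] += 1
        conn[1] += 1
    return load


pods = [f"pod-{i}" for i in range(10)]

# One connection that is effectively never recycled.
baseline = client_load(pods, 10_000, pool_size=1, max_requests_per_conn=10**9)

# Four connections, each replaced after 100 requests (100 backend draws total).
tuned = client_load(pods, 10_000, pool_size=4, max_requests_per_conn=100)

print("baseline busiest pod:", max(baseline.values()))
print("tuned busiest pod:", max(tuned.values()))
```

Recycling only helps statistically: each connection still pins its requests to one pod, so distribution improves with the number of reconnects rather than becoming exact, which is why these tweaks remain band-aids.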

The Permanent Solution: Embracing Layer 7 Routing 🚀

The key to a long-term solution lay in moving beyond Layer 4 connection-based load balancing and embracing Layer 7 (HTTP) routing. This approach allows for load balancing decisions to be made at the request level.
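Request-level balancing can be sketched with a toy proxy that terminates the client’s connection and picks a backend for every request (every HTTP/2 stream) independently, here with simple round-robin. The `RequestLevelBalancer` class is hypothetical and ignores everything a real proxy does beyond the routing decision.

```python
import itertools
from collections import Counter


class RequestLevelBalancer:
    """Toy Layer 7 balancer: one routing decision per request, not per connection."""

    def __init__(self, pods):
        self._next_pod = itertools.cycle(pods)

    def route(self, request):
        # A real proxy has parsed the request here and could also use
        # request["path"] or headers; this sketch just rotates through pods.
        return next(self._next_pod)


pods = [f"pod-{i}" for i in range(10)]
lb = RequestLevelBalancer(pods)

# A single client HTTP/2 connection multiplexing 10,000 streams
# (client-initiated HTTP/2 streams use odd stream IDs).
streams = [{"stream_id": 2 * i + 1, "path": "/api"} for i in range(10_000)]
load = Counter(lb.route(s) for s in streams)
print(load)  # every pod receives exactly 1,000 requests
```

Because the decision is made per stream, it no longer matters that all 10,000 requests arrive on one connection.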

There are several ways to achieve Layer 7 routing:

- A dedicated service mesh (like Istio)

- An HTTP-aware routing proxy

- An API Gateway

- An Ingress controller with request-level routing capabilities

Mariem’s team ultimately chose to introduce a service mesh, specifically Istio. This wasn’t a quick fix; the entire rollout project took approximately 6 months to complete.

By adding an HTTP routing layer, requests are now balanced based on their individual characteristics. The service mesh inspects HTTP traffic and makes intelligent routing decisions based on factors like:

- Request path

- Headers

- Configured routing rules

- Backend latency

- Backend health issues

Because each request is balanced independently, this routing spreads traffic evenly across all backend pods, even when clients hold a single persistent HTTP/2 connection.
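Factors such as backend latency and health can be folded into the per-request decision. As one illustration, the sketch below uses a least-request policy with "power of two choices": it discards unhealthy pods, samples two of the remaining ones, and picks the one with fewer requests in flight. The `Backend` type and `pick_backend` function are hypothetical, and this is not a description of Istio’s internal implementation or its default policy.

```python
import random
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    healthy: bool = True
    in_flight: int = 0  # requests currently outstanding on this pod


def pick_backend(backends, rng):
    """Pick a backend for one request (least-request, power of two choices)."""
    healthy = [b for b in backends if b.healthy]  # drop unhealthy pods
    if not healthy:
        raise RuntimeError("no healthy backends")
    if len(healthy) == 1:
        return healthy[0]
    a, b = rng.sample(healthy, 2)                 # two distinct random candidates
    return a if a.in_flight <= b.in_flight else b  # prefer the less busy one


rng = random.Random(7)
pods = [
    Backend("pod-a", in_flight=12),
    Backend("pod-b", in_flight=3),
    Backend("pod-c", healthy=False),
]
print(pick_backend(pods, rng).name)  # pod-b: pod-c is skipped, pod-b is less busy

# Send 300 requests that all stay in flight; load stays close to even.
fleet = [Backend(f"pod-{i}") for i in range(3)]
for _ in range(300):
    pick_backend(fleet, rng).in_flight += 1
print([b.in_flight for b in fleet])
```

Sampling two candidates instead of scanning every pod keeps each decision cheap while still steering requests away from busy or unhealthy backends.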

Key Takeaways: Navigating the HTTP/2 Landscape 💡

Mariem’s incident provided crucial lessons for anyone running Kubernetes in production, especially with modern protocols:

- 🚫 Never Rely on Default kube-proxy for HTTP/2: When dealing with HTTP/2-based traffic, including persistent connections or gRPC, do not rely on Kubernetes’ default kube-proxy load balancing. It’s simply not optimal for these use cases.

- ✅ Introduce Layer 7 Load Balancing: For HTTP/2 and similar protocols, you must introduce Layer 7 load balancing. Tools like a service mesh (e.g., Istio), an advanced ingress controller, or an API gateway are essential.

- 📈 Scale Your Backend Pods: Always strive to have as many backend pods as possible. This provides ample capacity to distribute incoming traffic more evenly, even with sophisticated Layer 7 routing in place.

This incident is a powerful reminder that while Kubernetes provides incredible defaults, understanding the nuances of how protocols like HTTP/2 interact with its underlying mechanisms is paramount. Proactive design with Layer 7 routing can save you from unexpected outages and ensure a smooth, performant experience for your users.

Thank you for joining us on this deep dive! Stay curious, keep learning, and happy Kube-ing! ✨